If I have to explain what gives me energy as an engineer, I would not start with technology. I would start with people.

The moment that gives me the most energy is not when I provide the solution. It is when someone else figures it out, understands why it works, and is proud of what they built.

Two recent experiences made that very clear to me.

The first was a GitLab Co-Create week with students and lecturers at Odisee (a university of applied sciences with a campus in Ghent), where we explored the GitLab Duo Agent Platform in a hands-on setup.

The second was the GitLab AI Hackathon: nearly 7,000 developers, 600+ agent and flow submissions in the AI Catalog, and six weeks of watching participants build intensely.

Different scale, same dynamic.

People got curious fast, shared approaches, and helped each other make progress. You could see ideas move quickly from rough concept to working prototype.

What I saw during Co-Create week

Before the week started

The interesting part started before the week itself.

Together with the Odisee team, I helped prepare the full setup upfront: self-hosted GPU infrastructure, model selection, GitLab configuration, runners, and the integration path between all components. It was not a lightweight demo environment. It was a serious production-grade setup with real architectural decisions and operational constraints.

That preparation with the lecturers mattered for another reason too. My role there was not to arrive with a complete blueprint and hand over “the right setup”. In several parts, we explored options together, validated trade-offs, and iterated quickly. Sometimes I was closer to a technical sparring partner or rubber duck than to a person giving final answers.

That helped avoid the classic trap of feeling lost in docs and disconnected implementation paths. Instead, we built enough shared confidence and direction to move forward pragmatically, both before and during the Co-Create week.

The 45-minute deep dive

During the week, there were many moments where I shared bits and pieces of the Duo Agent Platform instead of front-loading everything at once. That made it possible to adapt the knowledge-sharing to what students were most interested in, or to the specific issues they were running into.

One session went deep into the technical details of the full end-to-end flow in the Duo Agent Platform: what happens from the moment you chat with an agent or trigger a flow, checkpoint storage, tool calling, inference evaluation, authentication, and many other moving parts under the hood.

It took around 45 minutes and, honestly, this kind of topic can become dry very quickly. But the room stayed fully focused. Afterwards, the students asked detailed and thoughtful technical questions. They did not only want to use the platform. They wanted to understand it properly.

That depth mattered. Once they understood the mechanics, several constraints they had run into earlier suddenly made sense.

From explanation to peer ownership

After that session, students pushed far beyond the examples. Some needed half a sentence and were off building immediately. Others wanted deeper context first. Both approaches worked.

The most valuable pattern was peer-to-peer knowledge transfer. If I explained a specific issue to one team and another team hit the same problem later, I connected them instead of repeating the explanation myself. That forced students to explain their reasoning to peers, which reinforced their own understanding and helped the whole group move faster.

Minimum guidance, maximum ownership.

In the end, every team built something meaningful, even when they hit platform constraints.

What the AI hackathon reinforced

The GitLab AI Hackathon showed the same pattern at global scale.

When thousands of people build in parallel, they naturally pressure-test the full experience: workflows, defaults, docs, examples, and assumptions.

In this case, my role was mainly on the organizing side: helping run the event and supporting participants through channels like Discord, rather than working one-on-one on implementations.

That kind of real usage signal was still incredibly valuable. Through questions, reports, and shared experiments from participants, we could see where guidance, onboarding, or behavior could be clearer.

During the hackathon, we were able to turn several of those insights into concrete improvements while the event was still running.

Examples of that loop in practice:

- clearer onboarding guidance for common first-run pitfalls

- sharper examples around multi-step agent flows

- fixes and adjustments to rough edges surfaced through participant feedback

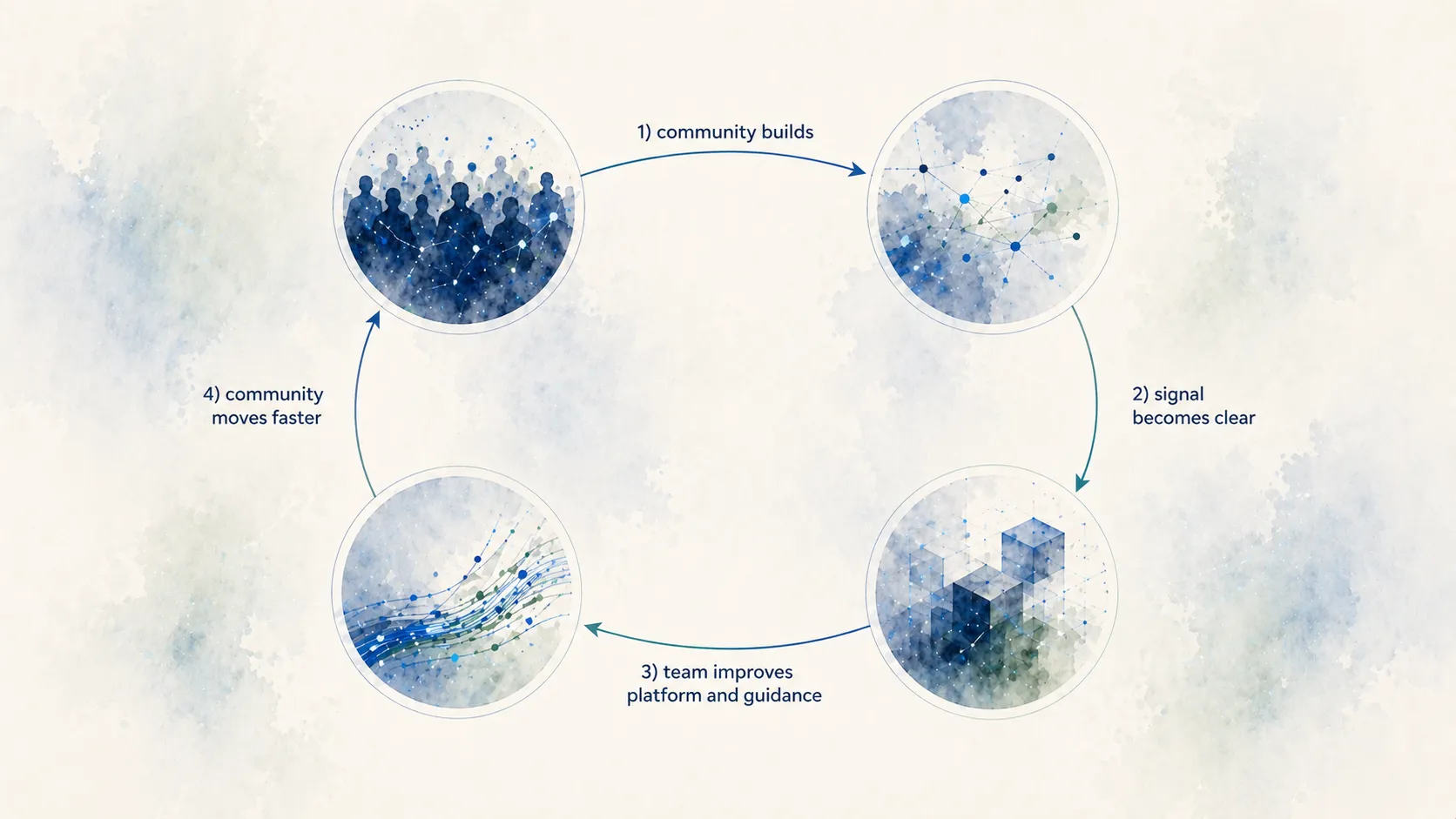

For me, that is one of the best possible loops:

Why this matters to me

I still love the architecture and engineering side: systems thinking, implementation details, and getting things reliable under real constraints.

But I now understand more clearly what type of work I want to optimize for: creating the conditions where others can learn, build, and grow ownership.

Both the hackathon and the Co-Create week reinforced that for me. Very different contexts, same signal: people move faster and further when you do not hand them answers, but help them develop their own path to one.

Looking back, this is also why changing direction six months ago made sense for me.

I moved toward an environment where this kind of enablement is not a side effect of the work; it is the work.

That is exactly what I now get to do in the DevRel Engineering team at GitLab: build, explain, unblock, and help people become autonomous.

Afterthoughts

One aspect of the Odisee week that deserves its own follow-up is the local infrastructure setup. Running models locally on GPUs hosted in your own data center is not only a principle; it is a practical option. It comes with complexity, but it is viable and it can make sense for institutions that care about control, privacy, and internal capability building.

I will likely write a dedicated follow-up on the infrastructure part, and on how it connects to a broader agentic engineering approach: not only model quality, but also platform design, workflow reliability, and ownership by the teams using it.